Performance Testing

Does your product work as expected? Run a Performance Test and find its bottlenecks.

What Is Performance Testing

Performance Testing is a practice that helps to identify whether your product will perform well under a specific workload. The test tries to detect product parameters that do not meet expectations. Those parameters should be adjusted during the first two stages of the product life cycle (development and introduction). The expected values for these parameters are typically set out in Service Level Agreements (SLAs), which form the basis for Performance Testing. Testing can be performed in a lab (quantitative testing) or in a production environment. It typically tests the speed, data transfer rate, responsiveness, scalability (the maximum user load the product can handle), stability (whether the product stays stable under varying loads), and reliability of the product. It is a strictly automated process.
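To make the measured parameters more concrete, here is a minimal sketch of how raw measurements could be reduced to throughput, latency percentiles, and an error rate. The `Sample` type, the `summarize` helper, and the ten measurements are made up for illustration; a real test would take them from a load-testing tool.

```python
import math
from dataclasses import dataclass


@dataclass
class Sample:
    """One measured request: how long it took and whether it succeeded."""
    latency_ms: float
    ok: bool


def percentile(values, pct):
    """Nearest-rank percentile: smallest value with at least pct% of the data at or below it."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def summarize(samples, duration_s):
    """Reduce raw samples to the numbers a performance report usually quotes."""
    latencies = [s.latency_ms for s in samples]
    failures = sum(1 for s in samples if not s.ok)
    return {
        "throughput_rps": len(samples) / duration_s,
        "p50_ms": percentile(latencies, 50),
        "p95_ms": percentile(latencies, 95),
        "error_rate": failures / len(samples),
    }


# Ten made-up measurements collected over a 2-second window (assumed data).
samples = [Sample(ms, True) for ms in (12, 15, 11, 14, 13, 18, 16, 12, 17)]
samples.append(Sample(250, False))  # one slow, failed request
report = summarize(samples, duration_s=2.0)
print(report)
```

Percentiles are used here rather than an average because a single slow request, like the 250 ms one above, barely moves the median but dominates the 95th percentile.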

Testers tend to focus more on the product’s functionality and sometimes neglect Performance Testing. Performance Testing is an important part of product development, even though the testers operate separately from the developers. Developers and operations staff run and maintain the product until it meets the performance expectations.

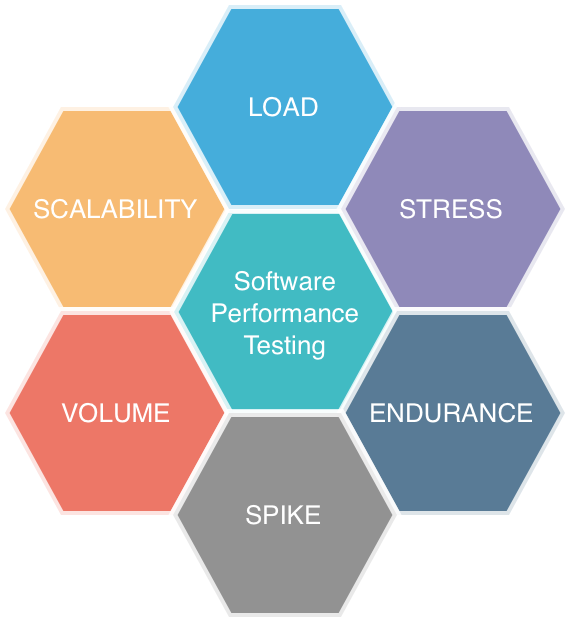

Types of Performance Tests:

- Load Testing tests how the product behaves when multiple users access it at the same time. It identifies the maximum operating capacity of a product.

- Stress Testing (or Breakpoint Testing) tests how the product behaves under extreme workloads. It determines the stability of the system. It identifies the breaking point of the product.

- Endurance Testing (or Soak Testing) tests how the product behaves under expected production load for a long period of time.

- Spike Testing tests whether the product stays stable during sudden, extreme increases and decreases in the load generated by users.

- Volume Testing tests how the product behaves when it is subjected to a large volume of data.

- Scalability Testing tests whether the product can scale up or down as the user load changes.

Source: Devopedia: Software Performance Testing
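The user-driven test types differ mainly in how the load changes over time. The sketch below expresses four of them as a function from elapsed minutes to a target number of simulated users. The function name `load_profile`, the baseline of 100 users, and every multiplier are illustrative assumptions, not standard values. Volume Testing is left out because it varies the amount of data rather than the number of users.

```python
def load_profile(kind, minute, baseline=100):
    """Return the target number of simulated users at a given minute.

    All shapes and multipliers are illustrative assumptions, not standards.
    """
    if kind == "load":
        # Ramp up to the expected load over 10 minutes, then hold it.
        return min(baseline, baseline * minute // 10)
    if kind == "stress":
        # Keep adding users past the expected load to look for the breaking point.
        return baseline + 50 * minute
    if kind == "endurance":
        # Hold the expected production load for the whole (long) run.
        return baseline
    if kind == "spike":
        # Jump to 10x the load during minutes 5 and 6, then drop back.
        return baseline * 10 if 5 <= minute < 7 else baseline
    raise ValueError(f"unknown profile: {kind}")


for kind in ("load", "stress", "endurance", "spike"):
    print(kind, [load_profile(kind, m) for m in range(0, 12, 2)])
```

A load generator could poll such a function once a minute and start or stop simulated users to match the returned target.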


Why You Might Want the Performance Testing

Performance Testing is a useful tool for locating performance problems because it highlights where the product fails. It verifies whether the product works as expected. It can also be used to compare two or more products. The goal of Performance Testing is not to find bugs but to eliminate performance bottlenecks (points where throughput drops and slows the product down, often because of an overloaded network).

Problems the Performance Testing Helps to Solve

How to Implement the Performance Testing

It is very important to start with good input. Specify what you expect from the test, and collect information during and after the testing.

Follow these steps:

- Identify the tools and environment: Understand the environment where the product will be tested (hardware, software, and network configurations).

- Set acceptance criteria: Set the product performance goals with all stakeholders.

- Plan and design tests: Identify the test scenarios. Create one or two models.

- Configure the test environment: Prepare the test environment and the tools needed to monitor resources.

- Implement the test design: Develop Performance Tests.

- Execute the test: Run and monitor your tests.

- Analyze, repair, and retest: Evaluate the data after the testing. Compare it to your expectations, repair, and run the Performance Tests again using different parameters.
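The execute and analyze steps above can be sketched with nothing but the Python standard library. This is a toy harness under stated assumptions, not a replacement for a dedicated tool: `fake_request` is a hypothetical stand-in for a call to the product under test, and the thresholds passed to `evaluate` are made-up acceptance criteria.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from statistics import mean


def fake_request():
    """Hypothetical stand-in for one call to the product under test."""
    time.sleep(0.01)  # pretend the product needs about 10 ms to answer
    return True


def timed_call(operation):
    """Run one operation and return (latency in seconds, success flag)."""
    start = time.perf_counter()
    try:
        ok = bool(operation())
    except Exception:
        ok = False  # any exception counts as a failed request
    return time.perf_counter() - start, ok


def run_load_test(operation, concurrency, total_requests):
    """Execute: issue total_requests calls with at most `concurrency` in flight."""
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        return list(pool.map(lambda _: timed_call(operation), range(total_requests)))


def evaluate(results, max_mean_latency_s, max_error_rate):
    """Analyze: compare the measurements with the agreed acceptance criteria."""
    mean_latency = mean(latency for latency, _ in results)
    error_rate = sum(1 for _, ok in results if not ok) / len(results)
    return {
        "mean_latency_s": mean_latency,
        "error_rate": error_rate,
        "passed": mean_latency <= max_mean_latency_s and error_rate <= max_error_rate,
    }


# Assumed acceptance criteria: mean latency up to 0.5 s, at most 1% errors.
results = run_load_test(fake_request, concurrency=5, total_requests=20)
verdict = evaluate(results, max_mean_latency_s=0.5, max_error_rate=0.01)
print(verdict)
```

To retest with different parameters, change `concurrency` or `total_requests` and run `evaluate` again with the same criteria, so that runs stay comparable.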

Common Pitfalls of the Performance Testing

- Do not run the tests on clients before the product is finished. That is very risky and could damage the product's reputation.

- Always automate. If you do the testing manually, there is a higher risk of not detecting all the bottlenecks.

- Make sure you test the correct, relevant parameters.

Resources for the Performance Testing

- Capterra: Performance Testing Software

- Devopedia: Software Performance Testing

- DevOps: The Art Of Software Performance Testing

- Seguetech: What Is Software Performance Testing

- TechTarget: Performance Testing

- Perpetual Enigma: Why Do We Need Performance Testing?


Prokop Simek

CEO
